Roughly 11 million people visit Hong Kong’s country parks each year. Most do so safely, returning home with stories and photos of the stunning beauty of nature. But what about the occasional hiker who wanders off the beaten path and into danger?

Although rare, such instances are more common than you might think. Hong Kong may be one of the densest urban centres in the world, but some 40% of its land is occupied by country parks, many of them rugged and mountainous.

The size of these parks, combined with their dense vegetation and tree cover, poses significant challenges to search and rescue efforts: In 2023, a high schooler was found alive after a weeklong search of Ma On Shan Country Park – an effort that saw rescuers try everything from dogs to aerial photography.

But what if there was another option? Intrigued by the challenges involved in using drones for search and rescue, Weiying Hou, a PhD student in Professor Chenshu Wu’s lab at the University of Hong Kong, has come up with a novel solution: outfitting an off-the-shelf drone with a device known as a Luneburg lens, allowing it to home in on and connect to the victim’s Wi-Fi signal from hundreds of metres away, regardless of forest cover.

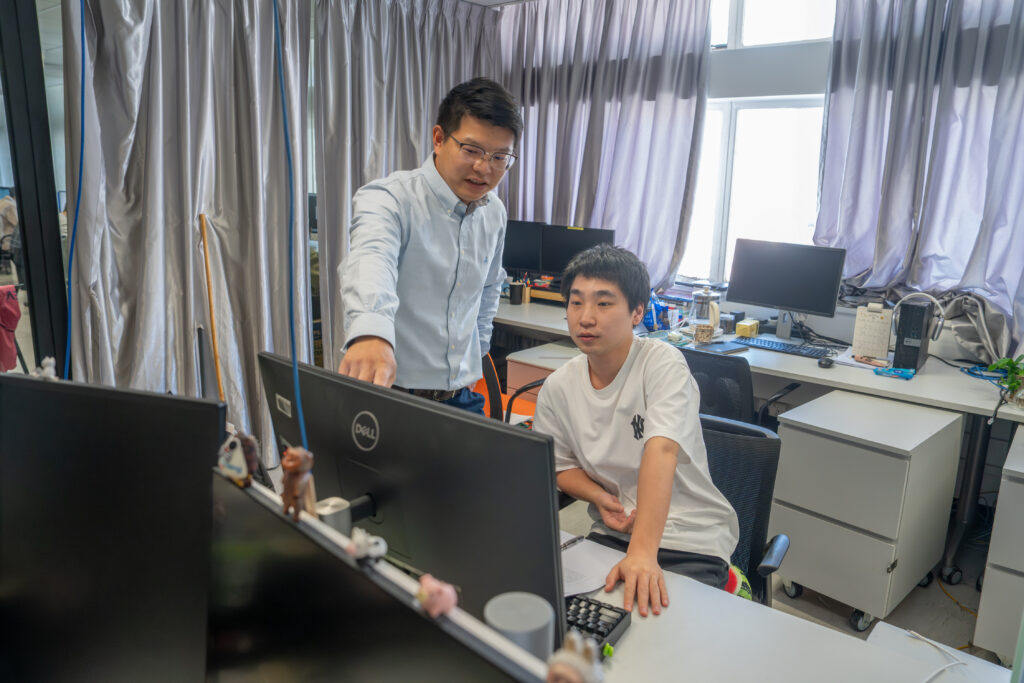

If that sounds simple, it’s not. The breakthrough took years of hard work and tinkering to achieve, as Hou, Wu, and another PhD student, Luca Jiang-Tao Yu, reengineered network cards, mastered the intricacies of 3D printing, and learned more than they ever thought they’d need to know about how to pilot a drone.

Now that work is paying off: The team’s device has been accepted by the prestigious MobiCom 2026 conference. Perhaps more importantly to Hou and the team, it’s also attracted interest from wilderness search and rescue groups.

“If more people can be saved using our technology, our hard work will have been worth it,” Hou says.

Humble beginnings

While the Wi-Fi drone may seem like a natural fit for Professor Wu’s HKU AIOT Lab, the original outline of the idea came not from an engineer, but from an undergraduate at the University’s School of Nursing.

The student, who was active in wilderness search and rescue groups, had tried cobbling together their own version using store-bought parts, but lacked the know-how and technical vision to make it work. After the idea was referred to HKU’s Inno Wing, which aims to give engineering students the resources and support needed to explore ideas with potential real-world applications, it eventually made its way to Professor Wu’s lab.

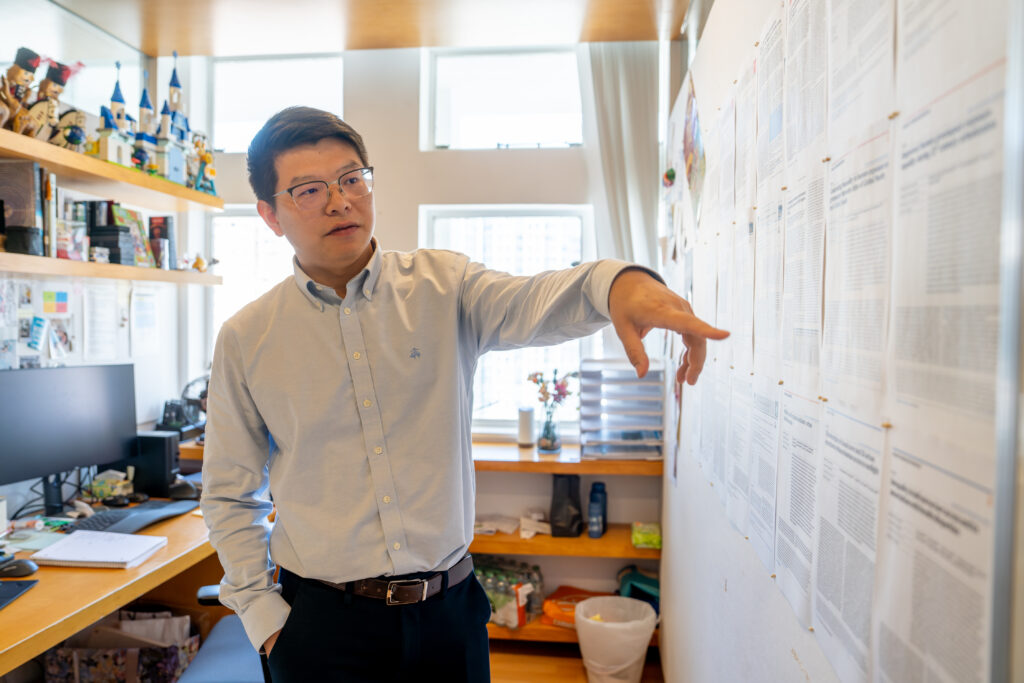

At first glance, the concept was straightforward – and a good match for Professor Wu’s expertise in the Internet of Things. The drone would be programmed to mimic a missing person’s home Wi-Fi network – a unique signal that simplifies identification and does not require line of sight. Once a connection was established, the drone could quickly triangulate the phone’s position and move overhead.
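For readers curious about the geometry behind that last step, here is a minimal sketch. It is not the team’s actual method, which is not detailed here. It assumes the drone records a bearing toward the phone from several known positions on a flat 2D plane, then finds the point closest to all of the bearing lines by least squares. The function name `locate_from_bearings` and the coordinates are hypothetical.

```python
import math

def locate_from_bearings(observations):
    """Estimate a 2D source position from (x, y, bearing_rad) readings.

    Each reading defines a line through the drone's position (x, y) along
    the bearing (radians, counterclockwise from the +x axis). The estimate
    is the point that minimises the summed squared perpendicular distance
    to all of those lines.
    """
    a11 = a12 = a22 = b1 = b2 = 0.0
    for x, y, theta in observations:
        dx, dy = math.cos(theta), math.sin(theta)
        # Projector onto the line's normal direction: I - d d^T
        p11, p12, p22 = 1 - dx * dx, -dx * dy, 1 - dy * dy
        a11 += p11
        a12 += p12
        a22 += p22
        b1 += p11 * x + p12 * y
        b2 += p12 * x + p22 * y
    det = a11 * a22 - a12 * a12
    if abs(det) < 1e-12:
        raise ValueError("bearings are parallel; position is not determined")
    # Solve the 2x2 normal equations with Cramer's rule.
    return ((a22 * b1 - a12 * b2) / det, (a11 * b2 - a12 * b1) / det)

# Hypothetical example: a phone at (100, 50) metres seen from three spots.
phone = (100.0, 50.0)
drone_positions = [(0.0, 0.0), (200.0, 0.0), (100.0, -100.0)]
readings = [
    (x, y, math.atan2(phone[1] - y, phone[0] - x)) for x, y in drone_positions
]
print(locate_from_bearings(readings))  # lands close to (100, 50)
```

With noise-free bearings the lines meet at a single point; with real, noisy readings the same calculation returns a best-fit compromise, which is why more than two readings help.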

The devil was in the details, however. In order for the drone to be effective, it needed to be able to search for a signal in all directions. Hou started by designing a cone – similar to a satellite dish – but it couldn’t provide the 360° coverage he needed. He would need to restart his search.

“This was before ChatGPT,” he recalls with a laugh.

Play ball

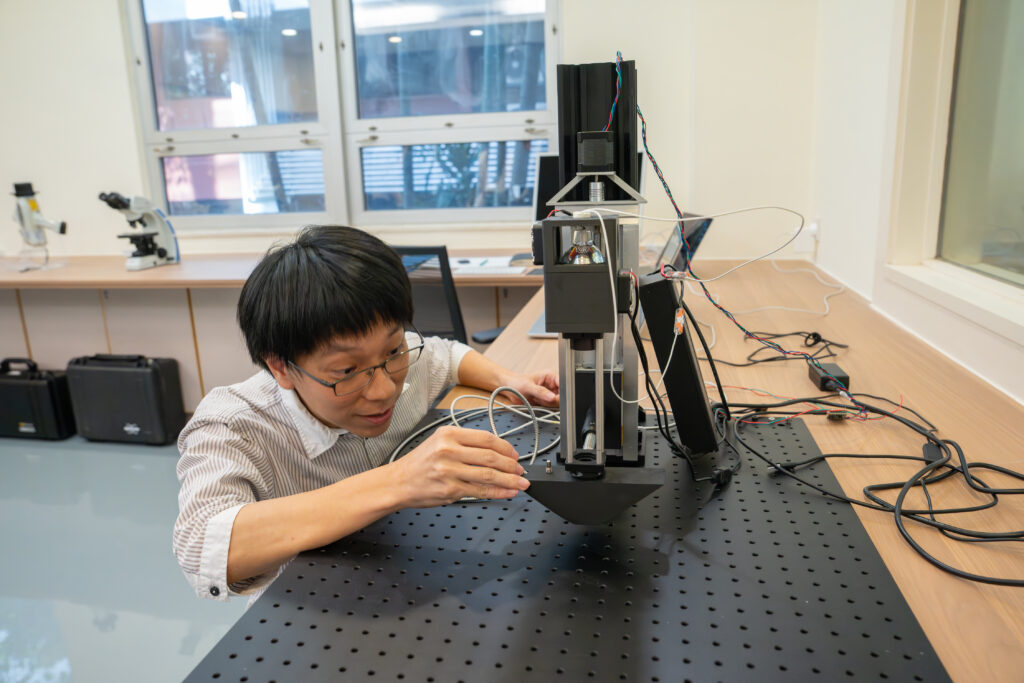

After six months of research, Hou found the solution on Wikipedia: a Luneburg lens, a spherical device that would allow the drone to pinpoint signals regardless of what direction it was facing.
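What makes the lens work in every direction is its symmetry. In the textbook Luneburg design, the refractive index falls smoothly from √2 at the centre to 1 at the surface, following n(r) = √(2 − (r/R)²), so a wave arriving from any side is focused onto the opposite surface. The sketch below computes that profile. The stepped-shell approximation is an assumption about how such a lens might be prepared for 3D printing, not a description of Hou’s design, and the function names are hypothetical.

```python
import math

def luneburg_index(r, radius):
    """Refractive index of an ideal Luneburg lens at distance r from its centre."""
    if not 0 <= r <= radius:
        raise ValueError("r must lie between 0 and the lens radius")
    return math.sqrt(2 - (r / radius) ** 2)

def shell_indices(radius, n_shells):
    """Approximate the smooth profile with concentric shells of equal thickness.

    Each shell is assigned the ideal index at its mid-radius (an assumed,
    simple discretisation). Shells are listed from the centre outwards.
    """
    thickness = radius / n_shells
    return [luneburg_index((i + 0.5) * thickness, radius) for i in range(n_shells)]

# Hypothetical 5-shell lens with a 10 cm radius.
for i, n in enumerate(shell_indices(0.10, 5)):
    print(f"shell {i}: n = {n:.3f}")
```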

That was just the beginning, according to Professor Wu. His lab had no experience working with Luneburg lenses, and Hou had to learn everything about them from scratch. “We were like primary school students,” Professor Wu recalls.

First came a crash course in 3D printing, so Hou could prototype his lens. Then there were engineering problems to tackle: The lens had to be light, to avoid draining the drone’s battery. It also needed to be able to pinpoint a signal instantly, in case the victim’s phone was dying, and then feed the directional data back into the drone controller to form a closed control loop. And how do you attach a large sphere to a drone without impacting its flight-worthiness?
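The closed control loop can be pictured with a toy example: measure the direction of the signal, compare it with where the drone is pointing, turn a little, and repeat. The sketch below is a simple proportional controller under stated assumptions: the bearing is given in a fixed world frame, the drone responds instantly, and the gain, rate limit, and function names are all hypothetical. The team’s real controller is not described here.

```python
import math

def wrap_angle(angle):
    """Wrap an angle in radians to the interval [-pi, pi)."""
    return (angle + math.pi) % (2 * math.pi) - math.pi

def yaw_rate_command(heading, signal_bearing, gain=1.0, max_rate=1.0):
    """Proportional controller: turn toward the signal along the shorter arc.

    Returns a yaw rate in rad/s, clamped to +/- max_rate.
    """
    error = wrap_angle(signal_bearing - heading)
    return max(-max_rate, min(max_rate, gain * error))

def simulate(heading, signal_bearing, steps=100, dt=0.1):
    """Run the loop: sense the bearing, command a turn, update the heading."""
    for _ in range(steps):
        rate = yaw_rate_command(heading, signal_bearing)
        heading = wrap_angle(heading + rate * dt)
    return heading

# Drone starts facing along +x; the signal comes from 90 degrees to its left.
final = simulate(0.0, math.radians(90))
print(f"final heading: {math.degrees(final):.1f} degrees")
```

Each pass through the loop uses a fresh measurement, so errors in one reading are corrected by the next instead of accumulating.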

Other challenges followed. Network cards had to be reprogrammed to work in concert – a job Professor Wu called “not intellectually challenging, but extremely tedious” – and a motherboard programmed to operate them.

Finally, the team had a working prototype. But when they sent it up into the sky, they received another nasty shock: the drone crashed on its very first test flight.

Working the problem

The failure, which Professor Wu estimates set them back HKD60,000, is just part of the engineering process, he says.

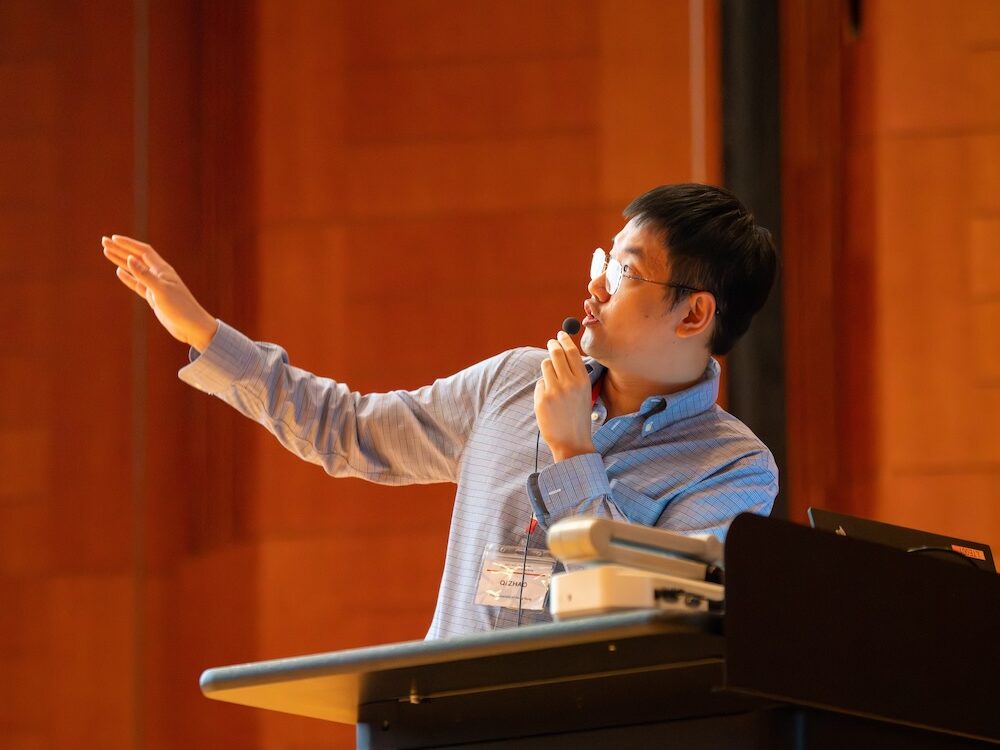

The team quickly rebuilt their device atop a bigger, more reliable drone. This time, the flight went smoothly, validating Hou’s years of work. In the months since, their design has been accepted by MobiCom and tested by mountain search and rescue teams in Hong Kong. Hou also won a presentation award at the Second Low-Altitude Summit.

The next step is upgrading the drone to support more wireless signals and dedicated tags. What won’t change is the team’s ethos: Everything is open-source, and Hou’s long-term goal is for mountain rescue teams around the world to be able to hack together their own versions using readily available components and free software.

“Everything is designed to be easy to implement,” he notes. “The lens can be replicated by anyone with a commercial 3D printer.”

For Wu, the drone’s success could open new pathways in the low-altitude economy, a key initiative for Hong Kong in the coming years, while the underlying tech has exciting potential applications in ocean monitoring, drone tracking, and other fields.

It’s also a validation of his lab’s approach to engineering. “We work on real problems,” he says. “In engineering, there’s an emphasis on novelty, but you always need to be thinking about how your work translates to the real world. What is the problem you’re solving? That’s what resonates.”

Hou agrees. “A good research problem is a real problem,” he says. While he acknowledges the drone needs some tweaks, he remains committed to his original mission.

“Hiking is extremely popular in Hong Kong, and sometimes people get lost,” he says. “I just wanted to make hiking in Hong Kong safer.”