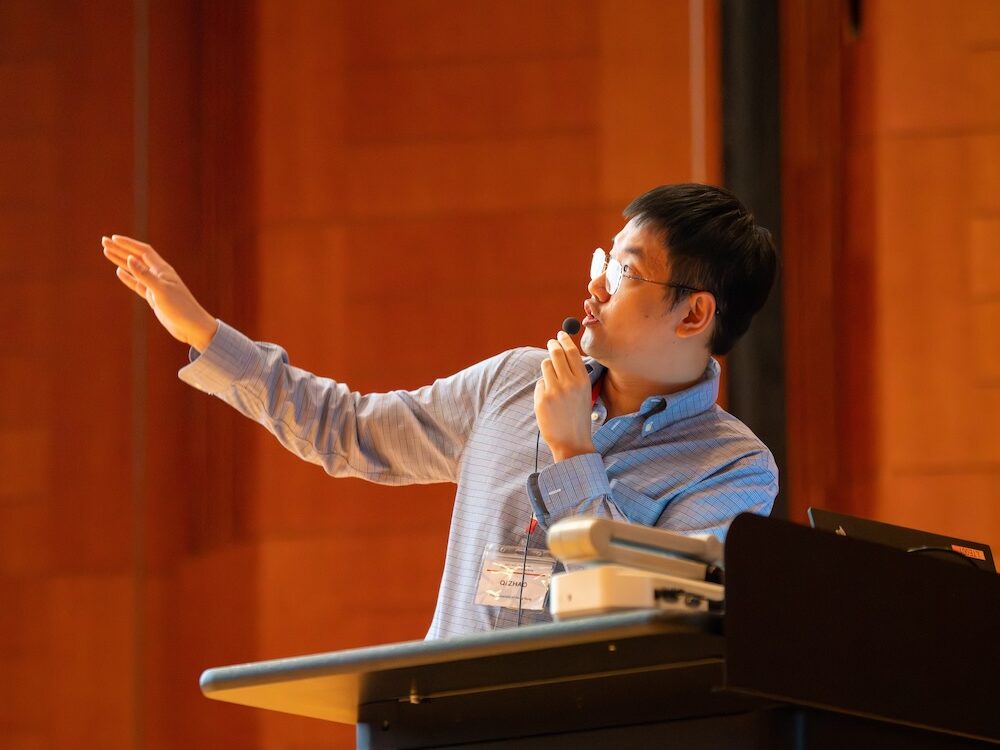

“Quantum computers don’t just calculate. They learn from the rules of nature itself.” — Prof. Qi Zhao

Artificial intelligence is everywhere — in our phones, our cities, and the tools we use to think.

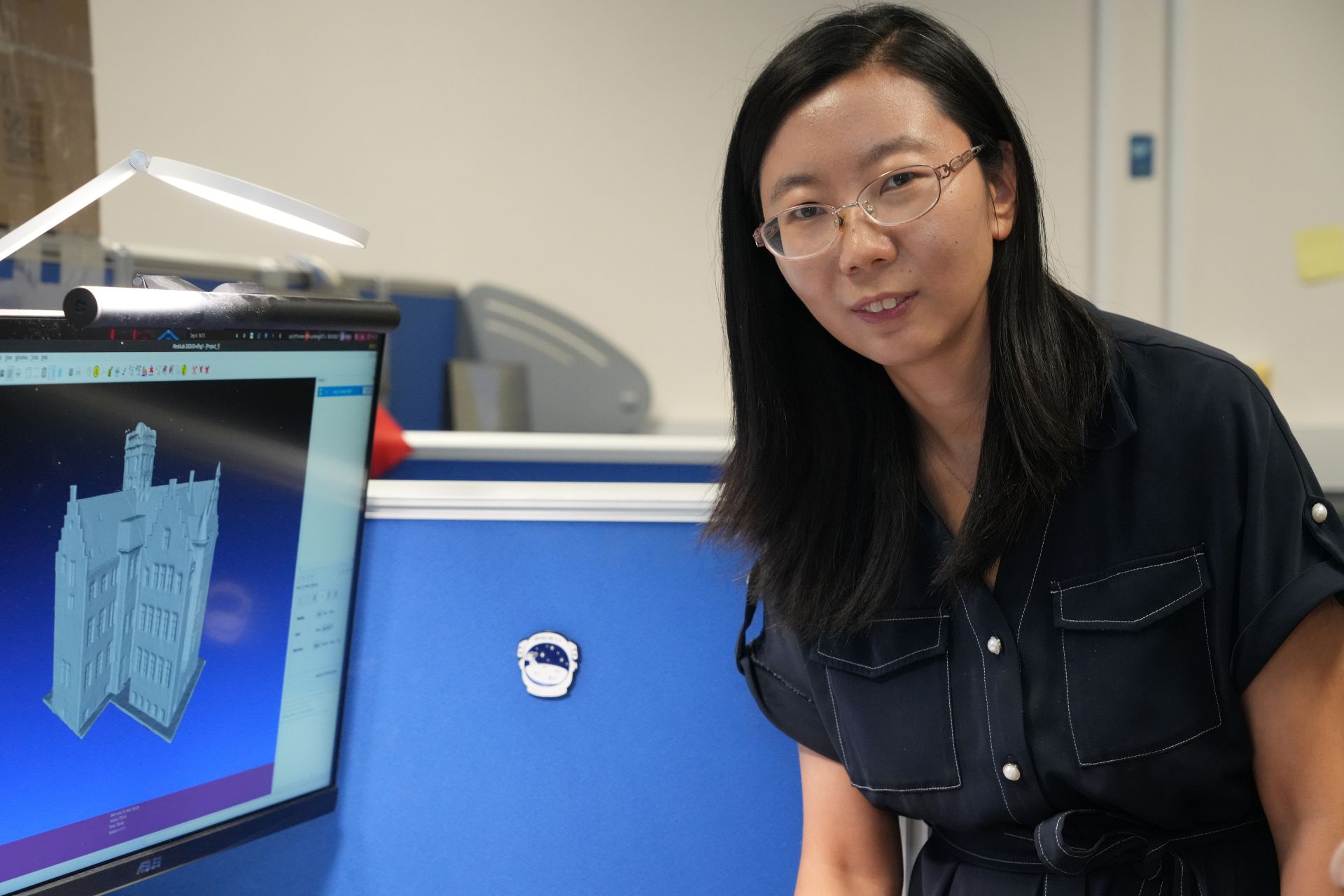

But for Professor Qi Zhao at The University of Hong Kong’s School of Computing and Data Science (CDS), the next leap in AI may come from a place far smaller than any silicon chip.

His research explores how quantum physics and machine learning can work together to create a new kind of intelligence — one that learns the way the universe learns.

From Theory to Computation

Zhao trained as a quantum information theorist, studying how information behaves when it is encoded in quantum states, such as qubits, rather than in classical bits.

At HKU CDS, he leads a group that builds hybrid computing models combining classical algorithms with quantum processors.

His goal is simple to state but hard to achieve: use quantum systems to make AI faster, smarter, and more energy-efficient.

“Classical computers follow fixed paths,” he explains. “Quantum computers can explore many paths at once. That difference changes how learning works.”

Reimagining Computation

Traditional AI trains neural networks through repetition, adjusting many parameters step by step until the network's errors shrink and patterns emerge.

Quantum computers take a different approach.

Many quantum learning methods rely on variational quantum algorithms, in which a small, parameterized quantum circuit is tuned step by step with help from a classical controller.

Think of it as teamwork: the quantum processor prepares and measures states that can be hard to simulate classically, while the classical computer evaluates the results and decides how to adjust the circuit. Together, they aim to solve problems that would take ordinary machines far longer to compute. Zhao’s team studies how this cooperation could transform optimization tasks, from image recognition to material design.
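To make this division of labor concrete, here is a minimal sketch of a variational loop, assuming a toy one-qubit circuit simulated in plain Python rather than run on real hardware. The circuit applies a rotation RY(θ) to the state |0⟩ and measures the expectation value ⟨Z⟩; a classical gradient-descent loop tunes θ to minimize it, obtaining gradients from extra circuit evaluations via the parameter-shift rule. The function names, learning rate, and cost are illustrative choices, not code from Zhao's group.

```python
import math

def expectation_z(theta: float) -> float:
    # Toy one-qubit "circuit": RY(theta)|0> = cos(theta/2)|0> + sin(theta/2)|1>.
    # Simulated exactly here; on hardware this value would be estimated
    # from many repeated measurements (shots).
    amp0 = math.cos(theta / 2)
    amp1 = math.sin(theta / 2)
    # <Z> = P(0) - P(1)
    return amp0 ** 2 - amp1 ** 2

def parameter_shift_gradient(theta: float) -> float:
    # Parameter-shift rule for a Pauli rotation gate:
    # d<Z>/dtheta = [f(theta + pi/2) - f(theta - pi/2)] / 2
    shift = math.pi / 2
    return (expectation_z(theta + shift) - expectation_z(theta - shift)) / 2

def train(theta: float = 0.1, learning_rate: float = 0.4, steps: int = 100) -> tuple[float, float]:
    # Classical controller: plain gradient descent on the circuit parameter,
    # querying the (simulated) quantum circuit for every gradient estimate.
    for _ in range(steps):
        theta -= learning_rate * parameter_shift_gradient(theta)
    return theta, expectation_z(theta)

theta_opt, cost = train()
print(f"theta = {theta_opt:.4f}, <Z> = {cost:.4f}")
```

Running it drives θ toward π, where ⟨Z⟩ approaches −1 and the qubit ends in |1⟩. Practical variational algorithms use many qubits and parameters and must cope with noisy estimates, but the loop keeps the same shape: evaluate the circuit on the quantum side, update its parameters on the classical side.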

Quantum Machine Learning in Action

Inside his lab, AI helps control fragile quantum hardware.

Algorithms adjust pulse shapes, timing, and temperature to keep qubits stable. The system learns which conditions produce reliable results and adapts automatically when the environment changes. “It’s feedback learning in the truest sense,” Zhao says. “The machine is teaching itself how to stay coherent.”
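The closed loop described above can be illustrated with a deliberately simple model, assuming one qubit and a single control knob: the amplitude of a pulse meant to flip the qubit from |0⟩ to |1⟩. The toy physics (a pulse that rotates the qubit by an angle proportional to its amplitude), the drift values, and the names `excited_population` and `recalibrate` are hypothetical, and finite-difference gradient ascent stands in for whatever learning method the lab actually uses.

```python
import math

def excited_population(amplitude: float, rabi_scale: float) -> float:
    # Toy model of a resonant control pulse: it rotates the qubit by
    # rabi_scale * amplitude radians, leaving it in |1> with probability sin^2(angle / 2).
    # On hardware this number would be estimated from repeated measurements.
    angle = rabi_scale * amplitude
    return math.sin(angle / 2) ** 2

def recalibrate(amplitude: float, rabi_scale: float,
                step: float = 0.5, eps: float = 1e-3, iterations: int = 50) -> float:
    # Feedback loop: nudge the pulse amplitude uphill on the measured population,
    # using a central finite difference as the gradient estimate.
    for _ in range(iterations):
        grad = (excited_population(amplitude + eps, rabi_scale)
                - excited_population(amplitude - eps, rabi_scale)) / (2 * eps)
        amplitude += step * grad
    return amplitude

# Start with a perfect flip pulse for rabi_scale = 1.0, then let the
# (hypothetical) hardware response drift and recalibrate after each change.
amplitude = math.pi
history = []
for rabi_scale in [1.0, 0.95, 0.9, 1.05]:
    before = excited_population(amplitude, rabi_scale)
    amplitude = recalibrate(amplitude, rabi_scale)
    after = excited_population(amplitude, rabi_scale)
    history.append((rabi_scale, before, after))
    print(f"scale={rabi_scale:.2f}  before={before:.4f}  after={after:.6f}")
```

Each time the simulated response drifts, the probability of reaching |1⟩ falls below 1 and the loop pulls it back. Real calibration works with noisy, shot-based estimates and many parameters at once (pulse shape, timing, and temperature), which calls for more noise-tolerant optimizers than this one.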

These experiments do more than improve performance. They show how AI and quantum physics can enhance each other.

AI stabilizes quantum devices; quantum mechanics gives AI new mathematical tools for creativity and pattern discovery.

Learning from Quantum Data

Zhao believes the next revolution will come when AI no longer just analyzes quantum data after it has been measured and turned into classical numbers, but learns from quantum states directly.

His group explores models in which quantum systems perform the learning themselves, potentially uncovering relationships that are hard for classical methods to detect.

Such systems might recognize molecular structures or financial correlations beyond human intuition.

“This is where AI stops imitating intelligence,” he explains. “It begins to share it.”

Mentorship and Collaboration at CDS

As a mentor, Zhao encourages students to cross boundaries between physics and computer science.

He collaborates closely with Prof. Giulio Chiribella, Prof. Yuxiang Yang, and Prof. Ravi Ramanathan, creating a bridge between theory, experiment, and data science.

In class, he simplifies complex formulas into visual intuition. His students learn not only to code algorithms but also to think about why an algorithm works. “The most exciting discoveries,” he says, “often happen when we try to explain them simply.”

Looking Ahead: The Shape of Quantum Intelligence

Zhao imagines a future in which AI systems running on quantum hardware help design drugs, manage energy grids, or simulate ecosystems in real time.

These machines will not replace human reasoning; they will extend it.

“Intelligence isn’t just logic,” he says. “It’s the ability to learn from limited information. That’s what quantum mechanics has been doing for billions of years.”

In his view, teaching machines to think quantumly is not just about computation — it’s about understanding learning itself.

And at HKU CDS, that journey has already begun.